“Photogrammetry is the science of making measurements from photographs. It infers the geometry of a scene from a set of unordered photographs or videos. Photography is the projection of a 3D scene onto a 2D plane, losing depth information. The goal of photogrammetry is to reverse this process.”
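
To make the quote's "projection loses depth" idea concrete, here is a minimal sketch under simplifying assumptions. It uses an ideal pinhole camera with no lens distortion and a made-up focal length of 800 pixels. For the second half, it uses a rectified two-camera setup where the second camera is simply shifted sideways by a known baseline. Real photogrammetry tools like Meshroom have to estimate the camera positions themselves from many overlapping photos, so this only illustrates the principle. It is not how any particular tool is implemented.

```python
from typing import Tuple

Point3D = Tuple[float, float, float]

def project(point: Point3D, focal: float, cam_x: float = 0.0) -> Tuple[float, float]:
    """Ideal pinhole camera at (cam_x, 0, 0) looking down +Z: (X, Y, Z) -> (f*(X - cam_x)/Z, f*Y/Z)."""
    x, y, z = point
    return (focal * (x - cam_x) / z, focal * y / z)

def depth_from_two_views(u_left: float, u_right: float, focal: float, baseline: float) -> float:
    """Rectified stereo: depth Z = f * baseline / disparity."""
    disparity = u_left - u_right  # horizontal shift of the same point between the two photos
    if disparity == 0:
        raise ValueError("zero disparity: depth cannot be recovered")
    return focal * baseline / disparity

f = 800.0  # assumed focal length, in pixels
near = (0.5, 0.25, 2.0)
far = (1.0, 0.5, 4.0)  # on the same ray through the camera, twice as far away

# One photo: both points land on the same pixel, so their depth is lost.
print(project(near, f), project(far, f))  # (200.0, 100.0) (200.0, 100.0)

# Two photos: the second camera is shifted 0.5 units to the right (assumed baseline).
b = 0.5
u_l, _ = project(far, f)
u_r, _ = project(far, f, cam_x=b)
print(depth_from_two_views(u_l, u_r, f, b))  # 4.0
```

The first print shows two different points landing on the same pixel, which is exactly the depth information a single photo throws away. The second print recovers the far point's depth because that point shifts by a measurable amount between the two views.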

I’ve been doing a lot of 3D modeling lately and, because I’m new to it, I’ve also been experimenting to find workflows I’m comfortable with.

For example, a friend of mine models a lot in VR. She swears by it. Everyone I’ve asked has their own preferred method. I’m trying to find mine…

I’d heard a lot about using your phone to “scan” something in real life (and then turn it into a 3D object) but never really looked into it properly. It took some digging to find out that this is called “photogrammetry” and that it’s widely used, especially for reconstructing environments.

It was a bit of a journey to find the best way to do it. Should I use a phone app? How would that work? Are they expensive? I want to use this for games, not environments, and there are so many different use cases… Most of the app recommendations you’ll find on Google are mobile apps that are free to download but require payment to unlock. Desktop software seemed to follow the same model, which made photogrammetry seem too expensive and too impractical for me… until I finally found Meshroom.

This program is a true blessing. Now that I’ve gotten into it, I can’t imagine using anything else. I’m writing this post to highlight some of the resources that helped me get started… as well as a few issues I had to troubleshoot first.

You can download Meshroom from the AliceVision website… but I strongly urge you NOT to, and to download it from the MeshroomCL GitHub releases page instead: https://github.com/openphotogrammetry/meshroomcl/releases

I decided to run the 0.9.0 pre-release because I kept getting the error “Error: This program needs a CUDA Enabled GPU”.

The older version of Meshroom requires an NVIDIA graphics card. I nearly gave up on it for this reason (I don’t have one)… until I found a discussion explaining that this is no longer a requirement in the newer version.
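
If you aren’t sure whether your machine has an NVIDIA card before picking a release, one quick heuristic is to look for `nvidia-smi`, the command-line tool that ships with NVIDIA’s driver. The sketch below is an assumption-laden shortcut, not an official compatibility check. A missing `nvidia-smi` usually means there is no NVIDIA driver, and therefore no CUDA. Its presence, however, doesn’t guarantee that a given Meshroom build will accept your GPU.

```python
import shutil
import subprocess

def has_nvidia_gpu() -> bool:
    """Heuristic only: look for NVIDIA's nvidia-smi tool and ask it to list GPUs."""
    exe = shutil.which("nvidia-smi")  # installed alongside NVIDIA's display driver
    if exe is None:
        return False  # no NVIDIA driver found, so CUDA is almost certainly unavailable
    try:
        # "-L" asks nvidia-smi to print one line per GPU, e.g. "GPU 0: ..."
        result = subprocess.run([exe, "-L"], capture_output=True, text=True, timeout=10)
    except (OSError, subprocess.SubprocessError):
        return False
    return result.returncode == 0 and "GPU" in result.stdout

if has_nvidia_gpu():
    print("NVIDIA GPU detected: CUDA builds may work (not guaranteed).")
else:
    print("No NVIDIA GPU detected: a CUDA-only build will likely refuse to run.")
```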

Running the Pre-release made the error stop, and I was able to comfortably turn my photos into 3D assets.

I also ran into another issue that almost made me give up because it seemed so random; nothing I tried was working…

I kept getting “loadPairs: Impossible to read the specified file”, pointing to “imageMatches.txt”… which indicated that this file was not being saved properly.

Eventually I found out that Avast was blocking Meshroom. Adding an exception for Meshroom in Avast (or just completely losing your shit and uninstalling it) fixed the problem.

It’s worth noting that virus scanners may get in the way.

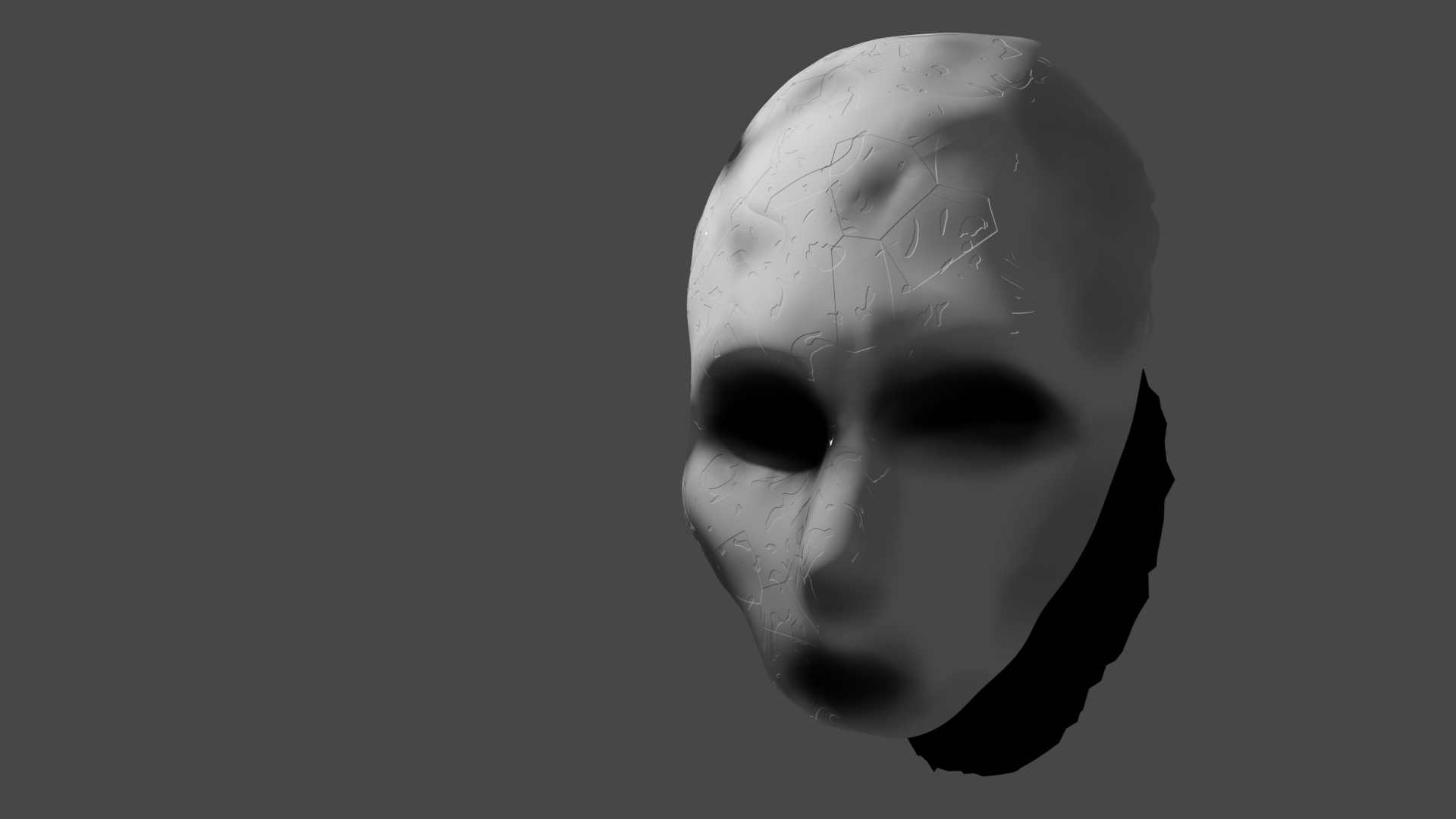

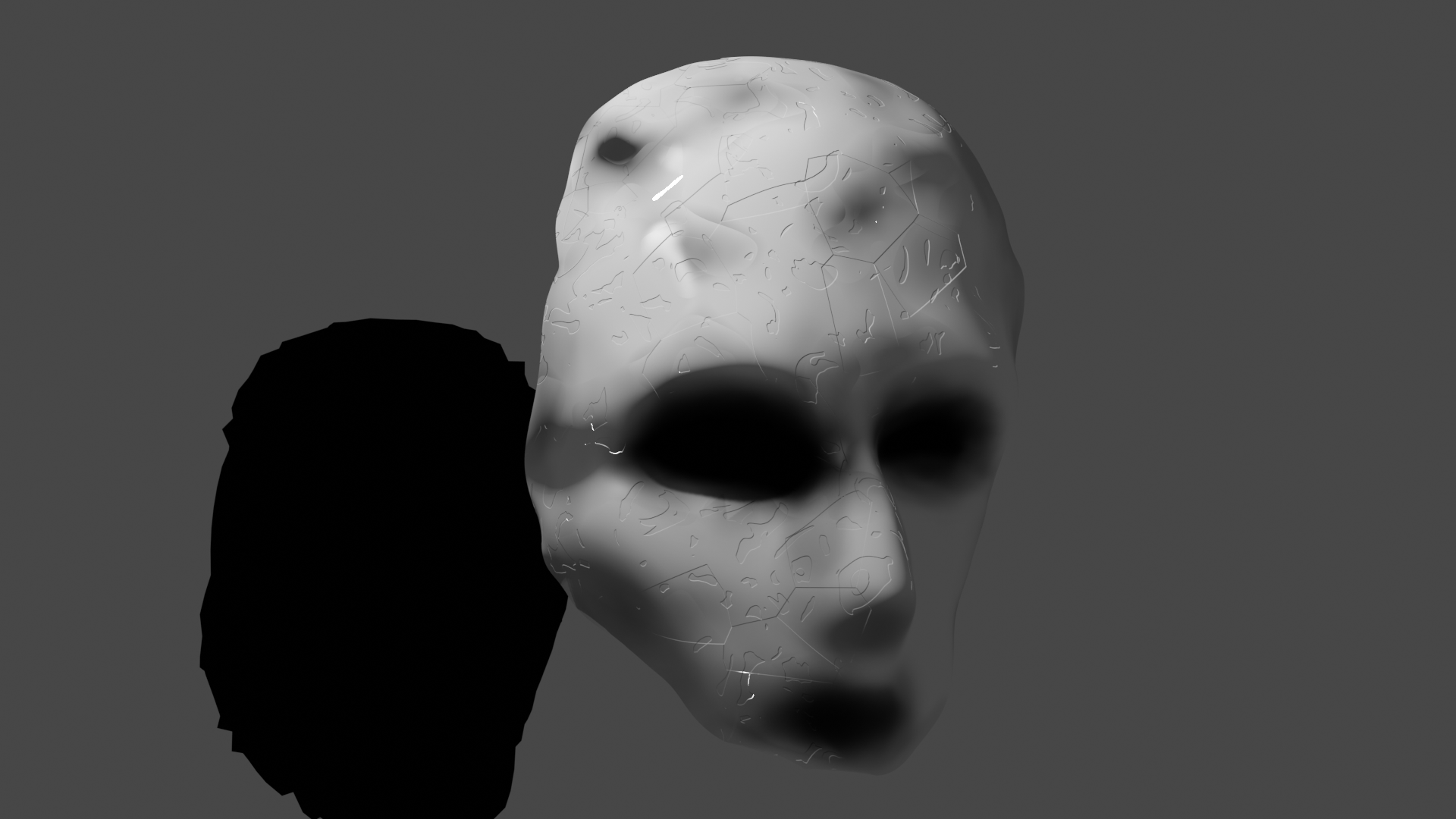
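
If you hit the same `loadPairs` error, one way to confirm that `imageMatches.txt` really isn’t being written is to search the project’s cache folder for it. This is a hypothetical helper and not part of Meshroom. It assumes the cache lives in a folder like `MeshroomCache` inside your project directory, so check where your own setup writes it. It lists every `imageMatches.txt` it finds along with the file’s size. Finding no file, or only an empty one, suggests something (like an antivirus) is interfering with the write.

```python
from pathlib import Path
from typing import List, Tuple

def find_image_matches(cache_dir: str) -> List[Tuple[Path, int]]:
    """Return every imageMatches.txt under cache_dir together with its size in bytes."""
    root = Path(cache_dir)
    # rglob searches all subfolders (assumption: the cache nests output in per-node folders)
    return [(p, p.stat().st_size) for p in sorted(root.rglob("imageMatches.txt"))]

def report(cache_dir: str) -> int:
    """Print one status line per file and return how many non-empty files were found."""
    matches = find_image_matches(cache_dir)
    if not matches:
        print("No imageMatches.txt found: the file was never written (or was removed).")
    non_empty = 0
    for path, size in matches:
        if size > 0:
            non_empty += 1
        status = "ok" if size > 0 else "EMPTY"
        print(f"{status:5} {size:>8} bytes  {path}")
    return non_empty
```

You would call it with your own cache path, e.g. `report("C:/path/to/project/MeshroomCache")`, where the path is a placeholder.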

Once I got past these issues, I took a set of very sloppy photos of my face with my phone (blurry, poor lighting, all of the mistakes!) to quickly see if I could make a spooky “mask” model for a half-second jump scare in the game I’m making.

I’m glad to say that the results were more than perfect:

They are good and spooky!

I found a couple of great tutorials on YouTube that made importing the generated .obj file from Meshroom into Blender much less intimidating.

Note that the generated mesh can be messy, and you’ll have to clean it up. This first tutorial walks you through the cleanup process. It’s incredibly simple, and I highly recommend following along:

* Photogrammetry in Blender and Meshroom – Blender Tutorial

This one was also great:

* Quick Photogrammetry and Blender

One piece of advice I kept getting was that the cleanup process is a lot of work… so much that it’s impractical (this is old advice, though). I’m still new to Blender, and even I didn’t think it was that bad once I understood these tutorials. Apparently support for this workflow has gotten much better, and it’s a lot more practical to use for games now.

I can’t imagine going back to anything else now. Even if you strip most of the detail from the generated object (reducing it a lot), the basic shape that’s left gives you much more to work from.
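
When reducing a scan for a game, it helps to know how heavy the mesh is before and after, for example after applying Blender’s Decimate modifier and re-exporting. The sketch below is a small hypothetical helper, not part of Meshroom or Blender. It counts the vertices, faces and triangles in Wavefront .obj text, assuming faces are plain `f` lines and that each n-sided face would be split into n - 2 triangles.

```python
from typing import Dict, Iterable

def obj_stats(lines: Iterable[str]) -> Dict[str, int]:
    """Count vertices, faces and triangles in Wavefront OBJ text."""
    stats = {"vertices": 0, "faces": 0, "triangles": 0}
    for line in lines:
        parts = line.split()
        if not parts:
            continue  # skip blank lines
        if parts[0] == "v":  # geometric vertex ("vt"/"vn" lines are not counted)
            stats["vertices"] += 1
        elif parts[0] == "f":
            corners = len(parts) - 1
            stats["faces"] += 1
            stats["triangles"] += max(corners - 2, 0)  # an n-gon fans into n - 2 triangles
    return stats

# Tiny inline example: one quad plus one triangle.
print(obj_stats(["v 0 0 0", "v 1 0 0", "v 1 1 0", "v 0 1 0", "f 1 2 3 4", "f 1 2 3"]))
```

For a real export, pass an open file handle instead, e.g. `obj_stats(open("texturedMesh.obj"))`. The file name here is an assumption, so use whatever your exported file is called.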

I also find it novel to be able to capture these shadows of real life and put them into a game. It replicates my 2D workflow of using archival footage and “re-using” it as some glitchy relic, giving it a second life. It’s wonderful…

Taking photos for photogrammetry can be a bit difficult to wrap your head around at first. There’s one video in particular that I found useful…

* 5 Common Mistakes when Photographing for Photogrammetry | CLICK 3D EP 1 | 3D Forensics CSI

Note, though, that this channel specializes in using photogrammetry for crime scenes (kinda, not really, but yes…). I’m recommending it because it was the most practical for me. Many of the learning resources out there are fairly esoteric. For example, I don’t have a drone, nor do I intend to use one to capture a landscape to recreate… so this person’s walkthrough of how to photograph for photogrammetry was the best match for games.

That’s it… that’s the meat of this post. I’m really into exploring how to use this in my own work.

Also, here’s a humorous closing thought…

So the established use for photogrammetry is to capture something in real life and translate it into 3D. I’m now wondering how practical it would be to do that in reverse. For example, you could use photo mode in AAA 3D games to capture objects in their 3D environments and turn them into 3D models for your own work… a bit like a form of “asset theft” meets asset swap.

Yes, I’m completely aware that this is a terrible idea and in line with a weird type of virtual theft… piracy maybe?? but… the idea sure made me laugh!

Edit: Thanks to this person on Mastodon, I now know that this was indeed a thing! The gist of this is amazing!

OGLE: The OpenGLExtractor

“The primary motivation for developing OGLE is to make available for re-use the 3D forms we see and interact with in our favorite 3D applications. Video gamers have a certain love affair with characters from their favorite games; animators may wish to reuse environments or objects from other applications or animations which don’t provide data-level access; architects could use this to bring 3D forms into their proposals and renderings; and digital fabrication technologies make it possible to automatically instantiate 3D objects in the real world.”

I’ve only looked into it briefly, but the old discussions surrounding it are interesting. I think I’m about to fall down a rabbit hole. Help.

Update: And thanks to coolpowers from Mastodon, I also just learned about 3D Ripper DX. This entire concept fascinates me so much! It seems to speak to what 3D spaces, objects, constructs… mean to people. In a way, it’s so meta. 3D copies reality, and then these replications become meaningful enough to us that we make weird conceptual copies of these copies. It’s fascinating how arbitrary this all is. Weirdly enough, it also makes sense.